Tens of billions of street view panoramas, covering millions of kilometers of roads and depicting street scenes at regular intervals, are available. This volume of image data makes it possible to address a multitude of mapping problems by exploring areas remotely, dramatically reducing the cost of in situ inventory, mapping, and monitoring campaigns. Much research has been dedicated to leveraging street view imagery in combination with other data sources, such as remotely sensed imagery or crowd-sourced information, to map particular types of objects or areas.

This imaging modality is referred to as street view, street-level, or street-side imagery.

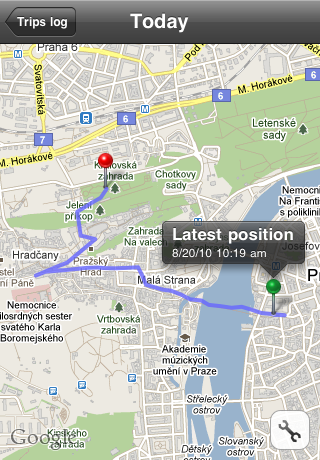

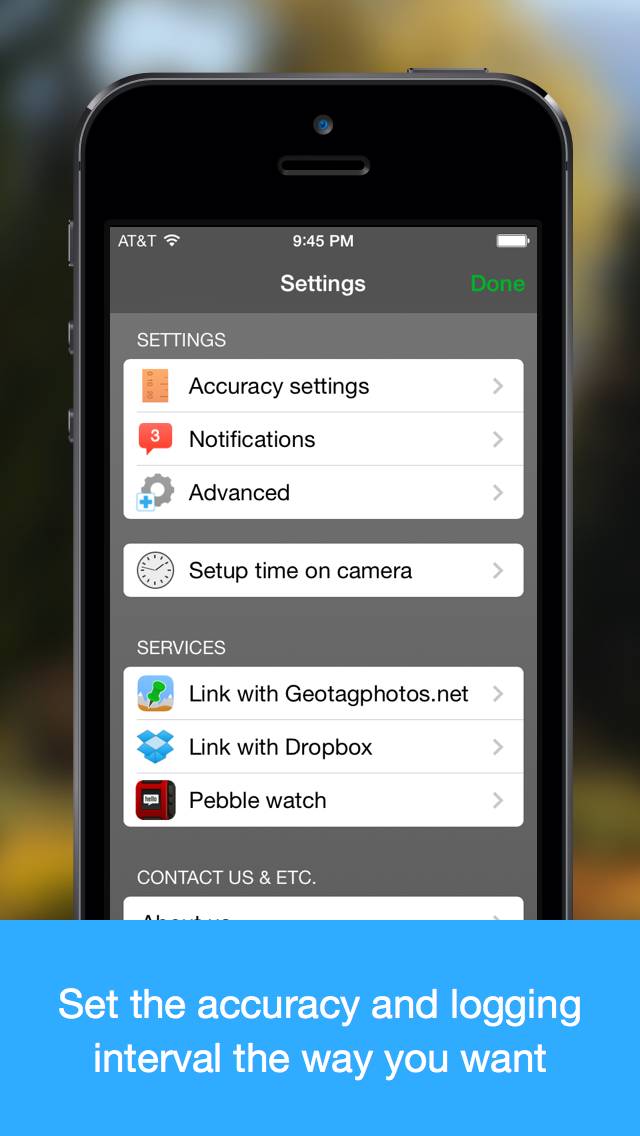

The rapid development of computer vision and machine learning techniques in recent decades has fueled an ever-growing interest in the automatic analysis of the huge image datasets accumulated by companies and individual users worldwide. Image databases with Global Positioning System (GPS) information, such as Google Street View (GSV) and images posted on social networks like Twitter, are regularly updated, provide dense coverage of the majority of populated areas, and can be queried seamlessly using APIs. In particular, 360° time-stamped geolocated panoramic images captured by cameras mounted on vehicles or carried by pedestrians are publicly accessible from GSV, Bing Streetside, Mapillary, OpenStreetCam, etc.

Many applications, such as autonomous navigation, urban planning, and asset monitoring, rely on the availability of accurate information about objects and their geolocations. In this paper, we propose the automatic detection and computation of the coordinates of recurring stationary objects of interest using street view imagery. Our processing pipeline relies on two fully convolutional neural networks: the first segments objects in the images, while the second estimates their distance from the camera. To geolocate all the detected objects coherently, we propose a novel custom Markov random field model. The novelty of the resulting pipeline is the combined use of monocular depth estimation and triangulation to enable automatic mapping of complex scenes with the simultaneous presence of multiple, visually similar objects of interest. We validate the effectiveness of our approach experimentally on two object classes: traffic lights and telegraph poles. The experiments report high object recall rates and a position precision of approximately 2 m, which approaches the precision of single-frequency GPS receivers.
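To make the triangulation idea mentioned above more concrete, here is a minimal sketch of intersecting two view rays from geotagged camera positions. It is not the pipeline described in the paper: it assumes camera positions already projected into a local east/north plane in metres, assumes a compass bearing toward the detected object is known for each view, and leaves out the depth estimates and the Markov random field entirely. The function names `bearing_to_unit` and `triangulate` are hypothetical.

```python
import math


def bearing_to_unit(bearing_deg):
    """Convert a compass bearing (degrees clockwise from north)
    into an (east, north) unit vector."""
    b = math.radians(bearing_deg)
    return math.sin(b), math.cos(b)


def triangulate(cam_a, bearing_a, cam_b, bearing_b, eps=1e-9):
    """Intersect two view rays in a local east/north plane (metres).

    cam_a, cam_b: (east, north) camera positions (assumed already
    projected from GPS coordinates into a local planar frame).
    bearing_a, bearing_b: compass bearings toward the object, in degrees.

    Returns the (east, north) intersection, or None when the rays are
    parallel or the intersection lies behind either camera.
    """
    ax, ay = cam_a
    bx, by = cam_b
    dax, day = bearing_to_unit(bearing_a)
    dbx, dby = bearing_to_unit(bearing_b)

    # Solve cam_a + t * d_a = cam_b + s * d_b using 2D cross products.
    denom = dax * dby - day * dbx
    if abs(denom) < eps:
        return None  # rays are (nearly) parallel, no unique intersection

    rx, ry = bx - ax, by - ay
    t = (rx * dby - ry * dbx) / denom  # distance along ray A
    s = (rx * day - ry * dax) / denom  # distance along ray B
    if t <= 0 or s <= 0:
        return None  # intersection is behind at least one camera

    return ax + t * dax, ay + t * day


if __name__ == "__main__":
    # Two cameras 10 m apart, both looking inward at 45 degrees.
    print(triangulate((0.0, 0.0), 45.0, (10.0, 0.0), 315.0))
```

With several visually similar objects in view, many such pairwise intersections are spurious, which is the ambiguity the text resolves with depth estimates and a Markov random field rather than with ray intersection alone.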
